Third-Person Visual Imitation Learning via Decoupled Hierarchical Controller

We study a generalized setup for learning from demonstration: building an agent that can manipulate novel objects in unseen scenarios after watching only a single video of a human demonstration, recorded from a third-person perspective. To accomplish this goal, the agent must not only understand the intent of the third-person demonstration video in its context but also perform the intended task in its own environment configuration. Our central insight is to enforce this structure explicitly during learning by decoupling what to achieve (the intended task) from how to perform it (the controller). We propose a hierarchical setup in which a high-level module learns to generate a series of first-person sub-goals conditioned on the third-person video demonstration, and a low-level controller predicts the actions to achieve those sub-goals. Our agent acts from raw image observations without any access to full state information. We show results on a real Baxter robot for two manipulation tasks: pouring, and placing objects in a box.
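The decoupling can be pictured as a simple control loop. Below is a minimal Python sketch, not the released implementation: `rollout`, `goal_generator`, `controller`, `reset`, `step`, the stride `k`, and the choice of taking exactly one action per predicted sub-goal are all hypothetical stand-ins. Observations are left abstract so that the toy usage can run on plain floats instead of images.

```python
from typing import Callable, List, Sequence, TypeVar

Obs = TypeVar("Obs")  # an image observation (any type; floats in the toy below)
Act = TypeVar("Act")  # a robot action


def rollout(
    human_video: Sequence[Obs],
    reset: Callable[[], Obs],
    step: Callable[[Act], Obs],
    goal_generator: Callable[[Obs, Obs, Obs], Obs],
    controller: Callable[[Obs, Obs], Act],
    k: int = 1,
) -> List[Act]:
    """Alternate the two levels: predict a first-person sub-goal, then act toward it.

    Hypothetical sketch: one action is taken per predicted sub-goal.
    """
    s_t = reset()
    actions: List[Act] = []
    for t in range(0, len(human_video) - k, k):
        # High level: what should the robot's own view look like at step t+k?
        goal = goal_generator(human_video[t], human_video[t + k], s_t)
        # Low level: which action moves the current view toward that goal?
        a_t = controller(s_t, goal)
        s_t = step(a_t)
        actions.append(a_t)
    return actions


# Toy 1-D "world": the observation is a single float position.
class ToyWorld:
    def __init__(self) -> None:
        self.state = 0.0

    def reset(self) -> float:
        self.state = 0.0
        return self.state

    def step(self, action: float) -> float:
        self.state += action
        return self.state


world = ToyWorld()
acts = rollout(
    human_video=[0.0, 1.0, 3.0],
    reset=world.reset,
    step=world.step,
    # Toy goal generator: shift the robot by the human's displacement.
    goal_generator=lambda h_t, h_tk, s_t: s_t + (h_tk - h_t),
    # Toy controller: the action is the remaining offset to the goal.
    controller=lambda s_t, goal: goal - s_t,
)
print(acts, world.state)  # [1.0, 2.0] 3.0
```

In this sketch the high level never outputs actions and the low level never sees the human video; that separation is what "what to achieve" versus "how to perform it" amounts to at run time.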

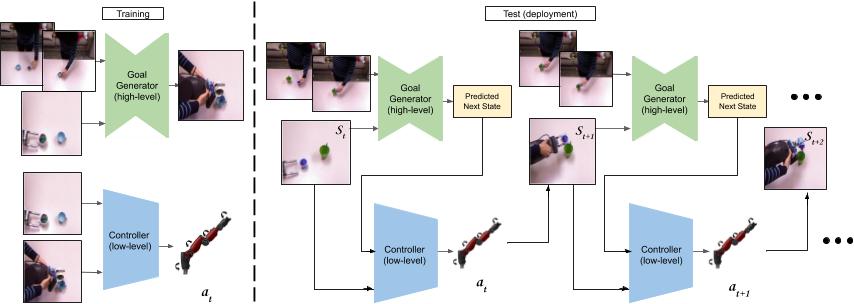

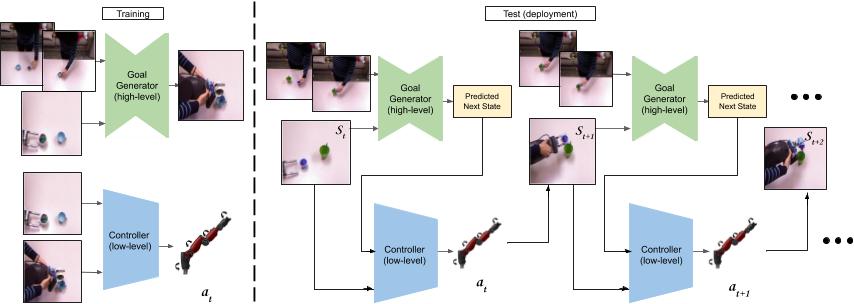

Decoupled Hierarchical Control

Our hierarchical approach consists of a goal generator that generates a visual goal state, which the low-level controller then uses as guidance to achieve the task. [Left] During training, the decoupled models are trained independently. The goal generator takes as input the human video frames $h_t$ and $h_{t+k}$ along with the observed robot state $s_t$ to predict the visual goal state of the robot at time $t+k$. The low-level controller is trained using $(s_t, a_t, s_{t+1})$ triplets. [Right] At inference, the two models are executed one after the other in a loop.
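Because the two models are trained independently, their training sets can be built separately. The Python sketch below shows one way to slice recordings into the tuples described above. It assumes frame-aligned human and robot videos for the goal generator, and a robot trajectory with one more state than actions for the controller, which it treats as an inverse model mapping $(s_t, s_{t+1})$ to $a_t$. The function names are hypothetical and the strings stand in for image frames.

```python
from typing import List, Sequence, Tuple, TypeVar

Obs = TypeVar("Obs")  # an image frame
Act = TypeVar("Act")  # a robot action


def goal_generator_examples(
    human: Sequence[Obs], robot: Sequence[Obs], k: int
) -> List[Tuple[Tuple[Obs, Obs, Obs], Obs]]:
    """Build ((h_t, h_{t+k}, s_t), s_{t+k}) input/target pairs.

    Assumes the human and robot videos are aligned frame by frame.
    """
    if len(human) != len(robot):
        raise ValueError("expected frame-aligned human and robot videos")
    return [
        ((human[t], human[t + k], robot[t]), robot[t + k])
        for t in range(len(robot) - k)
    ]


def controller_examples(
    states: Sequence[Obs], actions: Sequence[Act]
) -> List[Tuple[Tuple[Obs, Obs], Act]]:
    """Build ((s_t, s_{t+1}), a_t) pairs for an inverse-model controller.

    Needs only robot data; no human video is involved.
    """
    if len(states) != len(actions) + 1:
        raise ValueError("expected exactly one more state than actions")
    return [((states[t], states[t + 1]), actions[t]) for t in range(len(actions))]


g = goal_generator_examples(["h0", "h1", "h2"], ["s0", "s1", "s2"], k=1)
c = controller_examples(["s0", "s1", "s2"], ["a0", "a1"])
print(g[0])  # (('h0', 'h1', 's0'), 's1')
print(c[1])  # (('s1', 's2'), 'a1')
```

In this sketch the controller's data comes from robot trajectories alone, so the low-level model could be trained without any human demonstrations.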

Source Code and Environment

We have released the PyTorch-based implementation on the GitHub page. Try our code!

Paper and Bibtex

[Paper]

[ArXiv]


Citation

Pratyusha Sharma, Deepak Pathak, Abhinav Gupta. Third-Person Visual Imitation Learning via Decoupled Hierarchical Controller.

In NeurIPS 2019.

[Bibtex]


@inproceedings{sharma19thirdperson,
  Author = {Sharma, Pratyusha and Pathak, Deepak and Gupta, Abhinav},
  Title = {Third-Person Visual Imitation Learning via Decoupled Hierarchical Controller},
  Booktitle = {NeurIPS},
  Year = {2019}
}


Acknowledgements

We would like to thank David Held, Xiaolong Wang, Aayush Bansal, and members of the CMU visual learning lab and Berkeley AI Research lab for fruitful discussions. The work was carried out when PS was at CMU and DP was at UC Berkeley. This work was supported by ONR MURI N000141612007 and ONR Young Investigator Award to AG. DP is supported by the Facebook graduate fellowship.
